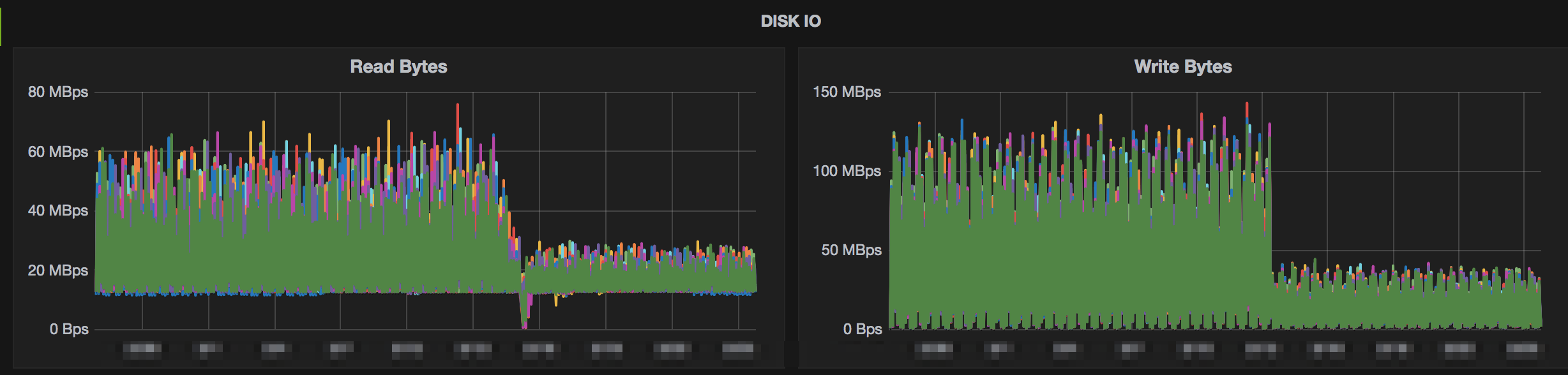

Fastest Snappy compression library in Node.js, powered by napi-rs and rust-snappy.

For small-size data, snappyjs is faster, and it supports the browser. But it doesn't have an async API, which is important for Node.js programs.

Install this package: `yarn add snappy`

API:

`export function compressSync(input: Buffer | string | ArrayBuffer | Uint8Array): Buffer`

`export function compress(input: Buffer | string | ArrayBuffer | Uint8Array): Promise<Buffer>`

`export function uncompressSync(compressed: Buffer): Buffer`

`export function uncompress(compressed: Buffer): Promise<Buffer>`

Performance benchmarks were run on Windows 11 (x86_64).

For more background on the changes between versions 6 and 7, please read this. Thanks.

Upstream snappy has rejected my PR to re-enable RTTI (see ). That leaves two options:

1) Somehow figuring out how to build at least the compression plugin bits of Ceph without RTTI? Maybe? I've no idea if this is possible/viable/sensible.

2) Getting patches into the various Linux distros to re-enable RTTI for snappy versions >= 1.1.9.

It turns out we had the exact same issue with leveldb: upstream disabled RTTI in leveldb 1.23 (see ), and openSUSE, Fedora and Arch (and I assume others; I only checked those three quickly) have since added patches to the downstream leveldb packages to re-enable RTTI. On the assumption this was going to be the way to go, I've got the appropriate change into openSUSE for snappy. I don't know what the procedure is for other distros offhand, but hopefully this information is helpful for whoever's able to take on that work.

In Hadoop, the lowest level of compression is at the block level, the same as in existing Linux systems (In.

"Best" compression can mean the smallest file size, the fastest compression, the least power used to compress (e.g. on a laptop), or the least influence on the system while compressing (e.g. ancient single-threaded programs using only one of the cores).
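Of those meanings of "best", Snappy aims squarely at speed. As a rough illustration of that trade-off, here is a hypothetical greedy LZ77-style encoder, `snappyCompressSketch`, that emits Snappy's raw block format. It is a sketch written from the public format description, not node-snappy's or Google's implementation: it remembers only the most recent position of each 4-byte sequence (in a `Map` keyed by the exact value, where real encoders use a fixed-size hash table), takes the first match it finds instead of searching for the longest, caps offsets at 65535, and omits the framing format and checksums.

```typescript
// Greedy LZ77-style encoder producing Snappy's raw block format.
// Hypothetical sketch: one remembered position per 4-byte sequence, first match wins,
// offsets capped at 65535 so the 2-byte-offset copy form always suffices.
function snappyCompressSketch(input: Uint8Array): Uint8Array {
  const out: number[] = [];

  // Preamble: uncompressed length as a little-endian base-128 varint.
  let n = input.length;
  while (n >= 0x80) {
    out.push((n & 0x7f) | 0x80);
    n >>>= 7;
  }
  out.push(n);

  // Literal: tag holds len-1 if < 60; otherwise 59 + (number of extra length bytes).
  const emitLiteral = (from: number, to: number): void => {
    if (to <= from) return;
    const l = to - from - 1;
    if (l < 60) {
      out.push(l << 2);
    } else {
      const extra = l < 0x100 ? 1 : l < 0x10000 ? 2 : l < 0x1000000 ? 3 : 4;
      out.push((59 + extra) << 2);
      for (let b = 0; b < extra; b++) out.push((l >>> (8 * b)) & 0xff);
    }
    for (let i = from; i < to; i++) out.push(input[i]);
  };

  // Copy: split into chunks of at most 64 bytes (the limit of the 2-byte-offset form).
  const emitCopy = (offset: number, len: number): void => {
    while (len > 0) {
      const chunk = Math.min(len, 64);
      if (chunk >= 4 && chunk <= 11 && offset < 2048) {
        // 1-byte-offset form: top 3 offset bits and len-4 packed into the tag.
        out.push(((offset >> 8) << 5) | ((chunk - 4) << 2) | 1, offset & 0xff);
      } else {
        // 2-byte-offset form: len-1 in the tag, offset little-endian.
        out.push(((chunk - 1) << 2) | 2, offset & 0xff, offset >> 8);
      }
      len -= chunk;
    }
  };

  const read32 = (i: number): number =>
    input[i] | (input[i + 1] << 8) | (input[i + 2] << 16) | (input[i + 3] << 24);
  const lastSeen = new Map<number, number>(); // 4-byte value -> most recent position
  let litStart = 0;
  let i = 0;
  while (i + 4 <= input.length) {
    const key = read32(i);
    const cand = lastSeen.get(key);
    lastSeen.set(key, i);
    if (cand !== undefined && i - cand <= 0xffff) {
      // The first 4 bytes are known to match; extend greedily (the source may overlap i).
      let len = 4;
      while (i + len < input.length && input[cand + len] === input[i + len]) len++;
      emitLiteral(litStart, i);
      emitCopy(i - cand, len);
      i += len;
      litStart = i;
    } else {
      i++;
    }
  }
  emitLiteral(litStart, input.length);
  return Uint8Array.from(out);
}
```

For example, `"abcabcabcabc"` encodes to the length byte 12, a 3-byte literal `"abc"`, and one copy of 9 bytes from offset 3. Matching only the last-seen position keeps each step cheap, which is the kind of choice that buys speed at the cost of ratio.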

This would ideally be fixed by getting RTTI re-enabled in snappy, so I've gone ahead and opened a pull request upstream.

LZO and Snappy are both data compression algorithms.
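LZO and Snappy both belong to the LZ77 family: the compressed stream is a sequence of literal runs and back-references ("copies") into output that has already been produced. To make that concrete for Snappy, here is a minimal sketch of a decoder for its raw block format (a varint with the uncompressed length, then tagged literal and copy elements). The names `snappyDecompress` and `readVarint` are hypothetical; the code is written from the public format description and ignores the separate framing format and its checksums.

```typescript
// Minimal decoder for Snappy's raw block format (no framing format, no CRC checks).
// A sketch written from the public format description; not taken from any library.

/** Reads the little-endian base-128 varint that stores the uncompressed length. */
function readVarint(buf: Uint8Array, pos: number): [number, number] {
  let value = 0;
  for (let shift = 0; shift <= 28; shift += 7) {
    if (pos >= buf.length) throw new Error("truncated length varint");
    const byte = buf[pos++];
    value += (byte & 0x7f) * 2 ** shift; // multiply instead of << to stay unsigned
    if ((byte & 0x80) === 0) return [value, pos];
  }
  throw new Error("length varint longer than 5 bytes");
}

function snappyDecompress(input: Uint8Array): Uint8Array {
  const [length, start] = readVarint(input, 0);
  const out = new Uint8Array(length);
  let ip = start; // read position in `input`
  let op = 0; // write position in `out`

  // Reads an n-byte little-endian unsigned integer and advances ip.
  const readLE = (n: number): number => {
    if (ip + n > input.length) throw new Error("truncated input");
    let v = 0;
    for (let i = 0; i < n; i++) v += input[ip + i] * 2 ** (8 * i);
    ip += n;
    return v;
  };

  while (ip < input.length) {
    const tag = input[ip++];
    const kind = tag & 0x03; // low two bits select the element type

    if (kind === 0) {
      // Literal: upper six bits hold len-1; values 60..63 mean len-1 follows in 1..4 bytes.
      let litLen = tag >> 2;
      if (litLen >= 60) litLen = readLE(litLen - 59);
      litLen += 1;
      if (ip + litLen > input.length || op + litLen > length) throw new Error("literal overruns buffer");
      out.set(input.subarray(ip, ip + litLen), op);
      ip += litLen;
      op += litLen;
      continue;
    }

    let len: number;
    let offset: number;
    if (kind === 1) {
      // Copy, 1-byte offset: len-4 (3 bits) and the top 3 offset bits live in the tag.
      len = ((tag >> 2) & 0x07) + 4;
      offset = ((tag >> 5) << 8) | readLE(1);
    } else {
      // Copy, 2-byte (kind 2) or 4-byte (kind 3) little-endian offset; len-1 in the tag.
      len = (tag >> 2) + 1;
      offset = readLE(kind === 2 ? 2 : 4);
    }
    if (offset === 0 || offset > op || op + len > length) throw new Error("invalid copy");
    // Copy byte by byte: when offset < len the source overlaps the bytes being written,
    // which is how runs are encoded (offset 1 repeats the previous byte).
    for (let i = 0; i < len; i++, op++) out[op] = out[op - offset];
  }

  if (op !== length) throw new Error("decoded size does not match header");
  return out;
}
```

For instance, the bytes `[12, 8, 97, 98, 99, 21, 3]` decode to `"abcabcabcabc"`: a 3-byte literal followed by a 9-byte copy from 3 bytes back, which overlaps the bytes it is producing.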

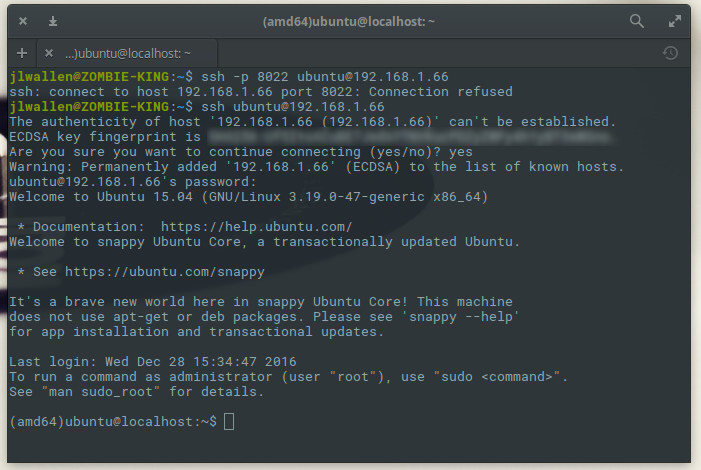

Snappy, previously known as Zippy, is a compression/decompression library used in production internally at Google by many projects, including BigTable, MapReduce and RPC. It does not aim for maximum compression, or for compatibility with any other compression library; instead, it aims for very high speeds and reasonable compression.

For snappy 6.x and below, please go to node-snappy.

I'm trying to install python-snappy on an Amazon Linux EC2 instance, but I keep getting the error below. Any idea how to fix this issue?

`sudo python3.6 -m pip install python-snappy`

Collecting python-s.

I want to implement a version of the snappy algorithm in C for Linux, but I need to understand deeply how the algorithm works before reading the source code, because I think it's complex. The problem is that I found very little technical documentation that describes the process of the algorithm (compression and decompression) in depth.

If you try to run Ceph with snappy 1.1.9 installed, `ceph status` will show HEALTH_WARN and tell you that your OSDs "have broken BlueStore compression". `ceph health detail` will tell you that each of your OSDs is "unable to load:snappy". The OSD logs will show something like this:

`Oct 27 08:55:33 node1 ceph-osd: load failed dlopen(): "/usr/lib64/ceph/compressor/libceph_snappy.so: undefined symbol: _ZTIN6snappy6SourceE" or "/usr/lib64/ceph/libceph_snappy.so: cannot open shared object file: No such file or directory"`

`Oct 27 08:55:33 node1 ceph-osd: create cannot load compressor of type snappy`

This is because RTTI was disabled in snappy 1.1.9, so the typeinfo for the `snappy::Source` class (which Ceph's `SnappyCompressor` creates a subclass of) isn't included in `libsnappy.so`. Ceph still builds just fine, because the compressors are built as shared libraries. The problem only manifests when our snappy plugin is `dlopen()`ed at runtime, and then the linker kicks in and can't find that missing symbol.
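The undefined symbol in the OSD log, `_ZTIN6snappy6SourceE`, is a C++ name mangled according to the Itanium C++ ABI used by GCC and Clang on Linux: `_ZTI` means "typeinfo for", `N ... E` wraps a nested name, and each component is prefixed by its length (`6snappy`, `6Source`). So it names the typeinfo object for `snappy::Source`, which is exactly what disappears when the library is built without RTTI. In practice `c++filt _ZTIN6snappy6SourceE` from GNU binutils prints the demangled form; the hypothetical helper `demangleTypeinfo` below only illustrates the decoding for this simple shape and handles no templates, substitutions or other productions.

```typescript
// Hypothetical helper: decodes only `_ZTI` + <source-name> or `_ZTI` + N <source-name>+ E.
// Templates, substitutions (S_), CV-qualifiers, etc. are deliberately unsupported.
function demangleTypeinfo(symbol: string): string {
  if (!symbol.startsWith("_ZTI")) throw new Error("not a typeinfo symbol");
  let pos = 4;

  // <source-name> ::= <decimal length> <identifier>, e.g. "6snappy".
  const readSourceName = (): string => {
    const digits = /^\d+/.exec(symbol.slice(pos));
    if (!digits) throw new Error(`expected <length><identifier> at offset ${pos}`);
    pos += digits[0].length;
    const len = Number(digits[0]);
    const name = symbol.slice(pos, pos + len);
    if (name.length !== len) throw new Error("identifier runs past end of symbol");
    pos += len;
    return name;
  };

  const parts: string[] = [];
  if (symbol[pos] === "N") {
    // Nested name: read components until the closing "E".
    pos++;
    while (symbol[pos] !== "E") {
      if (pos >= symbol.length) throw new Error("unterminated nested name");
      parts.push(readSourceName());
    }
    pos++; // skip "E"
  } else {
    parts.push(readSourceName());
  }
  if (parts.length === 0) throw new Error("empty name");
  if (pos !== symbol.length) throw new Error("unsupported trailing characters");
  return `typeinfo for ${parts.join("::")}`;
}
```

Applied to the symbol from the log, `demangleTypeinfo("_ZTIN6snappy6SourceE")` returns `"typeinfo for snappy::Source"`.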
